Abstract

We introduce Temporal Residual Jacobians as a novel representation to enable data-driven motion transfer. Our approach does not assume access to any rigging or intermediate shape keyframes, produces geometrically and temporally consistent motions, and can be used to transfer long motion sequences. Central to our approach are two dedicated neural networks that individually predict the local geometric and temporal changes that are subsequently integrated, spatially and temporally, to produce the final animated meshes. The two networks are jointly trained, complement each other in producing spatial and temporal signals, and are supervised directly with 3D positional information. During inference, in the absence of keyframes, our method essentially solves a motion extrapolation problem. We test our setup on diverse meshes (synthetic and scanned shapes) to demonstrate its effectiveness in generating realistic and natural-looking animations on unseen body shapes.
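The "integrated, spatially and temporally" step can be illustrated with a small NumPy sketch. It is a toy under stated assumptions, not the authors' networks or solver: the per-frame residuals are taken as given rather than predicted, temporal integration is assumed to be a plain cumulative sum starting from the identity, each Jacobian is simplified to a 3x3 matrix acting on a face's edge vectors, and spatial integration is a dense least-squares solve with one pinned vertex. The function names `integrate_temporally` and `integrate_spatially` are hypothetical.

```python
import numpy as np


def integrate_temporally(residuals):
    """Accumulate per-frame Jacobian residuals into per-frame Jacobians.

    residuals: array of shape (T, F, 3, 3), one 3x3 change per face per frame.
    Assumption: forward accumulation from the identity (rest pose).
    """
    num_faces = residuals.shape[1]
    identity = np.broadcast_to(np.eye(3), (num_faces, 3, 3))
    return identity + np.cumsum(residuals, axis=0)


def integrate_spatially(rest_vertices, faces, jacobians, anchor=0):
    """Recover vertex positions whose edges best match the transformed rest edges.

    Solves a least-squares problem: for every face edge (i, j),
    x_j - x_i should equal J_face @ (rest_j - rest_i).
    One vertex is pinned to remove the translation ambiguity.
    """
    n = len(rest_vertices)
    rows, rhs = [], []
    for face, J in zip(faces, jacobians):
        for a, b in ((0, 1), (1, 2), (2, 0)):
            i, j = face[a], face[b]
            row = np.zeros(n)
            row[j], row[i] = 1.0, -1.0
            rows.append(row)
            rhs.append(J @ (rest_vertices[j] - rest_vertices[i]))
    # Pin the anchor vertex at its rest position.
    pin = np.zeros(n)
    pin[anchor] = 1.0
    rows.append(pin)
    rhs.append(rest_vertices[anchor])
    positions, *_ = np.linalg.lstsq(np.array(rows), np.array(rhs), rcond=None)
    return positions


# Toy example: a two-triangle square that grows uniformly over four frames.
rest = np.array([[0, 0, 0], [1, 0, 0], [0, 1, 0], [1, 1, 0]], dtype=float)
faces = [[0, 1, 2], [1, 3, 2]]
residuals = np.tile(0.25 * np.eye(3), (4, len(faces), 1, 1))  # (T, F, 3, 3)

jacobians_per_frame = integrate_temporally(residuals)
animation = [integrate_spatially(rest, faces, J) for J in jacobians_per_frame]
```

In this toy setup the accumulated Jacobian of the last frame is twice the identity, so the final mesh is the rest square scaled by two about the pinned vertex. A practical system would use a sparse Poisson solve instead of the dense least-squares call used here.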

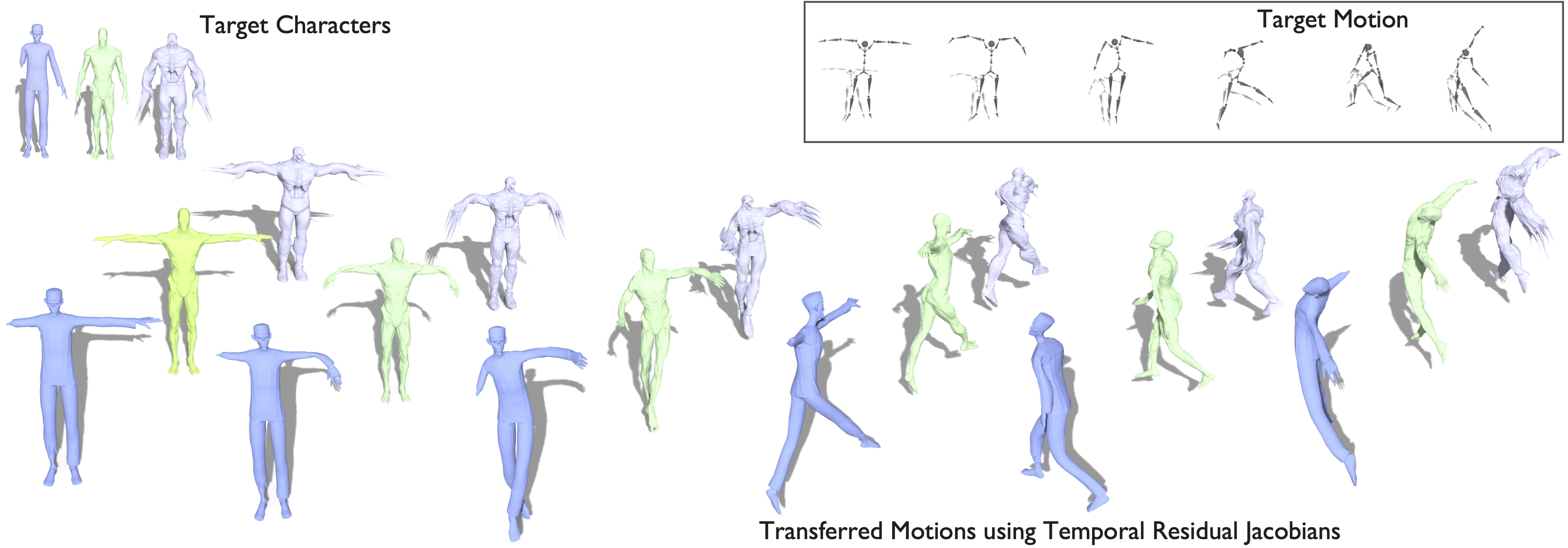

Motion sequences

We showcase our method on a wide variety of sequences from AMASS and on humanoid meshes with significantly different shapes and topologies. All results show motion transferred to an unseen shape without rigging.

Running (in-place)

Number of frames: 278

Punching

Number of frames: 187

Jumping jacks

Number of frames: 460

Notice secondary motion on the chubby man around the chest and belly regions.

Walking

Number of frames: 1845

Dancing

Number of frames: 1088

Generalization beyond humanoids

Temporal Residual Jacobians generalizes to scans (FAUST) and in-the-wild meshes (Mixamo).

Motion transfer from human to animal

Temporal Residual Jacobians can generalize motion transfer to animals even when it's trained only on humans.

Motion transfer on animals

DeformingThings4D

DeformingThings4D is a synthetic dataset of animations including animals.

COP3D: Common Pets in 3D

The COP3D dataset contains in-the-wild animal reconstructions from consumer devices.

Comparison

Running

Compared to our method, NJF exhibits jitter and artefacts, especially at later time steps.

Limitations

We do not impose physics constraints, so our animations can contain self-intersections. An interesting direction is to incorporate constraints for collision detection.

BibTeX

@inproceedings{10.1007/978-3-031-73636-0_6,
  author = {Muralikrishnan, Sanjeev and Dutt, Niladri and Chaudhuri, Siddhartha and Aigerman, Noam and Kim, Vladimir and Fisher, Matthew and Mitra, Niloy J.},
  title = {Temporal Residual Jacobians for Rig-Free Motion Transfer},
  year = {2024},
  isbn = {978-3-031-73635-3},
  publisher = {Springer-Verlag},
  address = {Berlin, Heidelberg},
  url = {https://doi.org/10.1007/978-3-031-73636-0_6},
  doi = {10.1007/978-3-031-73636-0_6},
  booktitle = {Computer Vision – ECCV 2024: 18th European Conference, Milan, Italy, September 29–October 4, 2024, Proceedings, Part LVIII},
  pages = {93–109},
  numpages = {17},
  location = {Milan, Italy}
}